What exactly is meant by long-term preservation? How does it really differ from a secure backup?

In computing, the term “archiving” covers many meanings today. So let’s first state briefly what long-term preservation is not:

- Long-term preservation is not backup: it is not merely preserving the bitstream of a file.

- Long-term preservation is not an HSM (Hierarchical Storage Management) service, which migrates files to tape to save disk space.

- Long-term preservation is not the last stop for data before it is forgotten or permanently lost.

Long-term preservation of digital documents has three main objectives:

- Keep the document

- Make it accessible

- Preserve intelligibility.

These three services are designed for the long term, that is, 30 years or more.

Keeping the document over time? This is the most obvious function required of a repository. It must ensure that the document remains available on the storage media and retains its integrity.
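In practice, integrity is usually checked with fixity information: a cryptographic digest recorded when the file is archived and recomputed during later audits. A minimal Python sketch using the standard `hashlib` module, assuming SHA-256 as the digest algorithm; the function names are illustrative and not taken from any particular repository software:

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_intact(path: Path, recorded_digest: str) -> bool:
    """True if the file still matches the digest recorded at ingest."""
    return sha256_of(path) == recorded_digest


# Usage: record the digest at ingest, then re-check it during a later audit.
with tempfile.TemporaryDirectory() as tmp:
    doc = Path(tmp) / "report.pdf"
    doc.write_bytes(b"%PDF-1.7 example content")
    recorded = sha256_of(doc)                   # stored alongside the archived file
    print(is_intact(doc, recorded))             # True: file unchanged
    doc.write_bytes(b"%PDF-1.7 corrupted content")
    print(is_intact(doc, recorded))             # False: the bitstream has changed
```

A digest only detects damage; repairing it relies on the other copies discussed further on.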

Accessing the document? Preservation without access would be pointless. This means being able to find the document on the storage media and read it (that is, open the file).

Preserving the document’s intelligibility? This means ensuring that the document remains understandable to its potential users over time. This goal may look ambitious, or even out of scope. Ambitious it certainly is, but it is the core of an archivist’s work. The fact that the document (or even the archivist) is now “digital” does not change the challenge.

Secure backup (or storage) addresses only the first two of the three goals listed above, and only in the short and medium term.

The time scale is therefore a key parameter of the problem. Over a horizon of about 10 years, the problem is relatively easy to manage. Secure, good-quality storage prevents accidental loss of material; technologies will probably not evolve so dramatically that documents become irreversibly unreadable; and the community of potential users will probably be close enough, scientifically and culturally, to the authors who created the document 10 years earlier to understand it.

If we move the cursor to a span of 30 years or more, none of the above is guaranteed unless supporting actions have been put in place. It is precisely this very long time horizon that makes up the core challenge of long-term preservation.

Preservation issues over the long term: technological obsolescence

Let’s quickly take stock of the difficulties we might face when reading a file, say, 10 years later, if no preservation action has been taken on it.

Your file is 10 years old:

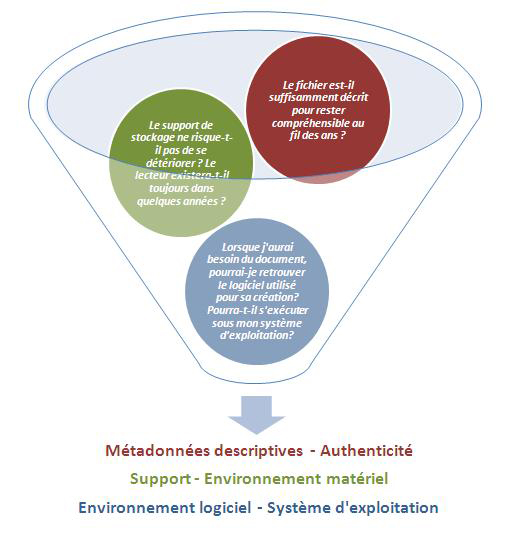

This example reveals four key risks that inevitably threaten a file:

- Hardware obsolescence

- Software obsolescence

- File format obsolescence

- Loss of the meaning of the content.

Doing long-term preservation means implementing the measures needed to mitigate these threats. What are those measures?

To counter media degradation and aging, multiple copies of archived documents are required, using diverse storage technologies where possible. Ideally, two copies should be kept on one site (one on disk, one on tape) and a third on a remote site (on tape). But these precautions alone will not suffice: the storage media must also be refreshed (i.e., renewed) regularly, replacing old media with more recent ones. Here again, it is safer to apply the precautionary principle. If a reputable manufacturer guarantees the usability of its technology for 10 years, there is little doubt that the media has been lab-tested and is reliable over 20 years. Even so, the media should be refreshed after … 5 years, i.e., half the lifetime guaranteed by the supplier.
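The copy and refresh rules above can be expressed as a simple policy check. This Python sketch is illustrative only: the `Copy` record and its fields are hypothetical, and the thresholds (three copies, two technologies, a second site, refresh at half the guaranteed lifetime) are taken from the recommendations in this section rather than from any storage product.

```python
import calendar
from dataclasses import dataclass
from datetime import date


@dataclass
class Copy:
    """One stored copy of an archived document (hypothetical record)."""
    site: str               # e.g. "main" or "remote"
    technology: str         # e.g. "disk" or "tape"
    written_on: date        # when the media was written
    guaranteed_years: int   # usability guaranteed by the manufacturer


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day (e.g. Jan 31 + 1 month -> end of Feb)."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def refresh_due(copy: Copy) -> date:
    """Precautionary rule: refresh at half the manufacturer's guaranteed lifetime."""
    return add_months(copy.written_on, copy.guaranteed_years * 12 // 2)


def policy_problems(copies: list[Copy], today: date) -> list[str]:
    """List deviations from the assumed copy and refresh policy."""
    problems = []
    if len(copies) < 3:
        problems.append(f"fewer than 3 copies ({len(copies)})")
    if len({c.technology for c in copies}) < 2:
        problems.append("all copies use a single storage technology")
    if len({c.site for c in copies}) < 2:
        problems.append("no copy on a second, remote site")
    for c in copies:
        due = refresh_due(c)
        if due <= today:
            problems.append(f"{c.technology} copy at {c.site} due for refresh since {due}")
    return problems


copies = [
    Copy("main", "disk", date(2019, 3, 1), 10),
    Copy("main", "tape", date(2021, 6, 1), 10),
    Copy("remote", "tape", date(2021, 6, 1), 10),
]
for p in policy_problems(copies, today=date(2024, 6, 1)):
    print(p)  # disk copy at main due for refresh since 2024-03-01
```

With a 10-year guarantee, each copy is flagged 5 years after it was written, matching the rule of thumb stated above.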

To counter the end of life of hardware or software, a technology and market watch should be put in place to give early warning of upcoming obsolescence. On this topic as on others, it is strongly recommended to favor standards and avoid dependence on proprietary solutions.
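Part of such a watch can be automated by comparing the archive’s format inventory with a watch list of announced end-of-support dates. In this Python sketch the watch list, its format identifiers, its dates and the 3-year alert horizon are all hypothetical placeholders; a real watch would be fed by format registries and vendor announcements.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical watch list: format identifier -> expected end of support.
# These entries are placeholders, not real announcements.
WATCH_LIST = {
    "vendor-format-a": date(2026, 12, 31),
    "vendor-format-b": date(2035, 1, 1),
}


def obsolescence_alerts(
    inventory: dict[str, str], today: date, horizon_days: int = 3 * 365
) -> list[tuple[str, int, date]]:
    """Return (format, file_count, end_of_support) for held formats ending within the horizon."""
    counts = Counter(inventory.values())          # files held per format
    limit = today + timedelta(days=horizon_days)
    alerts = []
    for fmt, end in sorted(WATCH_LIST.items()):
        if counts[fmt] and end <= limit:          # Counter returns 0 for absent formats
            alerts.append((fmt, counts[fmt], end))
    return alerts


inventory = {
    "a.doc": "vendor-format-a",
    "b.doc": "vendor-format-a",
    "c.pdf": "pdf-a",
    "d.bin": "vendor-format-b",
}
print(obsolescence_alerts(inventory, today=date(2025, 1, 1)))
# [('vendor-format-a', 2, datetime.date(2026, 12, 31))]
```

Counting affected files gives a first idea of the size of any migration that an alert would trigger.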

If a given piece of software does become obsolete, emulation may be a solution, albeit a very expensive one. It consists of recreating the system environment of the software so that it can run again. The technique is mainly used today to revive early video games such as Pac-Man.

To avoid the dead end of obsolete file formats, only durable formats should be preserved. What is a durable format? First and foremost, it is a published format, i.e., one whose specifications are freely available: in the most extreme cases, only a published format allows a developer to write a dedicated program to open the file again. A durable format is also widely used, or on its way to becoming so, and eventually becomes a standard. Based on these criteria, the main formats currently considered durable are listed in the article “List of archive formats” on the PAC platform (FACILE).
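Checking a format starts with identifying it, and preservation tools generally rely on the file’s signature (its “magic number”) rather than on its extension. A minimal Python sketch: the signature table covers only a few well-known formats, and the `ACCEPTED` set is a hypothetical policy for illustration, not the FACILE list mentioned above.

```python
import tempfile
from pathlib import Path

# A few well-known file signatures ("magic numbers"); far from exhaustive.
SIGNATURES = [
    (b"%PDF-", "pdf"),
    (b"\x89PNG\r\n\x1a\n", "png"),
    (b"II*\x00", "tiff"),          # little-endian TIFF
    (b"MM\x00*", "tiff"),          # big-endian TIFF
    (b"\xff\xd8\xff", "jpeg"),
    (b"GIF87a", "gif"),
    (b"GIF89a", "gif"),
    (b"PK\x03\x04", "zip-container"),  # ZIP, but also ODF and OOXML documents
]

# Hypothetical acceptance policy, for illustration only.
ACCEPTED = {"pdf", "png", "tiff"}


def identify(header: bytes) -> str:
    """Return a format label based on the leading bytes, or 'unknown'."""
    for magic, label in SIGNATURES:
        if header.startswith(magic):
            return label
    return "unknown"


def check_file(path: Path) -> tuple[str, bool]:
    """Identify a file from its first bytes and say whether its format is accepted."""
    with path.open("rb") as f:
        label = identify(f.read(16))
    return label, label in ACCEPTED


# Usage: a TIFF saved with a misleading extension is still recognized.
with tempfile.TemporaryDirectory() as tmp:
    scan = Path(tmp) / "scan.dat"
    scan.write_bytes(b"II*\x00" + b"\x00" * 20)
    print(check_file(scan))  # ('tiff', True)
```

A signature only identifies the family: telling a PDF/A file from an ordinary PDF, for instance, requires a dedicated validator.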

Note that choosing a durable format does not remove the risk that the format will one day become obsolete; it only delays the day when a conversion is required to keep the file readable. The document will then be converted from its original format to a new one, preserving its content.
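A small, self-contained case of such a conversion is migrating a plain-text file from a legacy character encoding to UTF-8. The Python sketch below assumes the legacy encoding is known (Latin-1 by default) and checks that the conversion is lossless by re-encoding the migrated text and comparing it with the original bytes; the original file is only read, never modified. Real format migrations (word-processing files, images) need dedicated tools.

```python
import tempfile
from pathlib import Path


def migrate_to_utf8(src: Path, dst: Path, legacy_encoding: str = "latin-1") -> bool:
    """Convert a legacy-encoded text file to UTF-8 and verify nothing was lost.

    Returns True if the migrated file decodes to the same text and that text,
    re-encoded in the legacy encoding, reproduces the original bytes exactly.
    """
    original = src.read_bytes()
    text = original.decode(legacy_encoding)   # assumption: the legacy encoding is known
    dst.write_bytes(text.encode("utf-8"))     # write bytes, so no newline translation
    migrated_text = dst.read_bytes().decode("utf-8")
    return migrated_text == text and migrated_text.encode(legacy_encoding) == original


with tempfile.TemporaryDirectory() as tmp:
    old = Path(tmp) / "notes_latin1.txt"
    old.write_bytes("Procès-verbal, été 1994\n".encode("latin-1"))
    new = Path(tmp) / "notes_utf8.txt"
    print(migrate_to_utf8(old, new))             # True: content preserved
    print(new.read_bytes() != old.read_bytes())  # True: the encoding did change
```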

To overcome the last obstacle (the lack of documentation), the solution lies in one word: metadata. Metadata is, literally, “data about data”: all the information needed to describe the document, which must be preserved along with it to ensure its intelligibility in the future.
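As an illustration, metadata can be kept in a sidecar record stored next to the file. The Python sketch below writes a JSON record combining descriptive, technical and fixity information; the field names and the `.metadata.json` naming are hypothetical, and a production repository would follow an established schema (for example Dublin Core for description or PREMIS for preservation metadata). The whole file is read into memory for simplicity.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def write_sidecar(path: Path, title: str, creator: str, fmt: str, description: str) -> Path:
    """Write <file>.metadata.json next to the file and return its path (hypothetical layout)."""
    data = path.read_bytes()
    record = {
        "descriptive": {"title": title, "creator": creator, "description": description},
        "technical": {"format": fmt, "size_bytes": len(data)},
        "fixity": {"algorithm": "sha256", "digest": hashlib.sha256(data).hexdigest()},
        "archived_at": datetime.now(timezone.utc).isoformat(),
    }
    sidecar = path.with_name(path.name + ".metadata.json")
    sidecar.write_text(json.dumps(record, indent=2, ensure_ascii=False), encoding="utf-8")
    return sidecar


# Usage with made-up example values.
with tempfile.TemporaryDirectory() as tmp:
    doc = Path(tmp) / "survey.csv"
    doc.write_text("site,depth_m\nA,12\n", encoding="utf-8")
    meta = write_sidecar(doc, "Survey results", "Field team", "text/csv",
                         "Water depth per site; depth_m is in metres.")
    print(meta.read_text(encoding="utf-8"))
```

The description field carries exactly the kind of context (here, the unit of a column) that a future reader could not otherwise recover from the data alone.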